Digitizing Learning Materials for Anki/SuperMemo: Scanning and Optical Character Recognition (OCR)

Introduction

Relying on any SRS for memorizing and learning means you need digital sources. A digital source is even more important if you’re using Incremental Reading in SuperMemo or Anki. In some cases you have to digitize your learning materials yourself, i.e., convert your source materials into a digital format. Over the years, I’ve learned various tools and ways to streamline and automate this process. This article is about how I do it for SuperMemo/Anki.

Before you attempt to scan or OCR anything, look for available digital sources

More and more learning materials are available in digital formats. It’s absolutely unnecessary to scan something yourself and then OCR it when a digital version already exists. Always look for a digital format first.

Start with your local public library or school library. Almost every university library has subscriptions to giant collections of eBooks, research papers, reference books, etc. These are goldmines worth searching. If you don’t have access to those, a paid or free digital version is almost guaranteed to exist online. Don’t DIY unless you absolutely have no choice. It’s very boring and time-consuming; believe me, I tried.

Most reference books and research papers are available only in PDF format. eBooks come in various formats; choose EPUB or MOBI, as they don’t need OCR and are ready to be imported immediately.

1. Digitizing

Unfortunately, not everything is available digitally. If you have an old book that doesn’t have a digital version, or your professor hands out lecture slides without uploading them online, you need to manually convert them into digital formats. You have 3 options: typing, scanner and camera.

I. Typing

The worst way is typing. Even if you can type 100 words per minute, you can’t possibly sustain that speed throughout. Not only is it exhausting, it’s also error-prone. You could start the selection process while typing, choosing what’s important enough to be typed and imported, but it’s a lot more efficient to select after you have the whole source. Switching between selecting and typing is just not efficient enough.

II. Scanners

1. Bed scanner

The first scanner I bought was a bed scanner, the CanoScan LiDE 110. First, if your source material is a book, you will almost always miss the parts along the spine. Cleaning that up takes a long time, and it can mess up the OCR process.

The most troublesome part is, you have to:

1. Turn a page

2. Press it against the scanner

3. Press start

4. Wait at least 8 seconds (depending on your setting: color and DPI) for it to finish

Repeat this 100 times if the book has 200 pages. This is the worst. Don’t use a bed scanner. In my opinion, it really doesn’t matter whether you buy an expensive one or a cheap one. Yes, expensive ones scan faster, but it’s still too time-consuming. I’ve done this for a few exam materials. Then I couldn’t bear it and looked for other solutions, which brings me to:

2. Hand-held scanner

I definitely recommend a hand-held scanner over a bed scanner. I have one. Back in high school, I used a hand-held scanner to scan textbooks and import them into Anki. You don’t need to buy one that offers more than 600 DPI. In most cases, black and white at 300 DPI is good enough. It already takes long enough to scan in black and white at over 600 DPI, not to mention over 900. With a high DPI setting, if you move the scanner too fast, the image will be distorted; too slow and you’ll fall asleep. Imagine spending 10 seconds scanning one A4 page. Then do it 200 times. NOPE.

A hand-held scanner handles the spine problem better than a bed scanner. Most hand-held scanners have a slim edge designed to solve this problem.

3. Sheet-fed scanner

I haven’t tried any sheet-fed scanner because they are too expensive. If you’re willing to rip your books, a sheet-fed scanner offers the best quality and speed. The output images are well-aligned and crystal clear. You don’t need much post-processing correction.

Bed scanner vs. Hand-held scanner vs. Sheet-fed scanner

Comparison (Generally speaking):

1. Price: Sheet-fed > Hand-held > Bed

2. Quality: Sheet-fed > Bed > Hand-held

3. Efficiency: Sheet-fed > Hand-held > Bed

If you have to use a scanner, I recommend a hand-held scanner. I think it’s the most suitable among the three. It balances well between price and quality. It’s not as expensive as a sheet-fed scanner, while the quality is not too bad for OCR.

III. DSLR and Phone Camera

This guide provides a very good start:

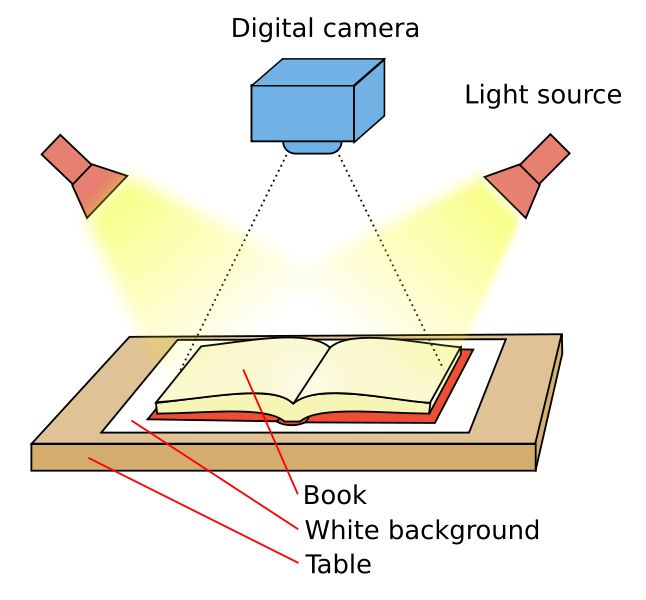

If you have a DSLR or any digital camera, you can set it up as the guide suggests. My tip: get a Bluetooth remote shutter and a tripod.

Most phone cameras nowadays offer good enough quality for OCR. It can be a bit tricky to set up the lighting to avoid shadows.

My setup is very simple: I put my phone over the edge of a stack of books, put something heavy on it to keep it from falling, and use a flashlight for lighting. I use the app Open Camera on Android. It automatically takes a shot every 5 seconds, which gives me enough time to flip a page.

One advantage of a hand-held scanner over a camera is that you don’t have to worry about shadows. An optimal camera setup can be tricky, but if you can get the environment and lighting right, you probably don’t need a scanner.

2. Processing

Since I’m on Windows, all of the processing software mentioned here runs on Windows. I’m not sure whether it’s available on macOS or Linux. Similar alternatives should work just as well.

I. Pre-processing

1. Images from a scanner or camera

Most scanners come with their own software, but the one that comes with the CanoScan LiDE 110 is pretty crappy, so I use ScanTailor. It does a great job at aligning, page splitting, and other cleanup.

2. PDFs

For PDFs, there are a lot of tools to choose from for splitting a PDF into individual images.

3. Batch Cropping

After alignment and splitting, I use XnView for batch cropping. First, cropping removes unnecessary space that might be mistaken for text during OCR. Second, it removes the headers and page numbers that would otherwise mess up the OCR output.

If you don’t crop them, they will be interspersed throughout the output text. Not only is it a hassle to delete them every now and then, but sometimes a passage won’t make sense while you’re reading, and only later will you figure out, “Oh, it’s the header.”

Therefore, check before batch cropping. For most research papers and reference books, the position of the headers is pretty consistent, so you can set the cropping coordinates and do it once and for all.

I use XnView’s batch processing function to crop images with headers and footnotes.
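If you would rather script this step than click through XnView, the same idea fits in a few lines of Python. This is only a rough sketch, not what I actually use: `crop_box` and `crop_folder` are hypothetical names, the 8% header and 6% footer bands are made-up defaults you would measure on your own pages, and the cropping itself assumes the third-party Pillow library is installed.

```python
from pathlib import Path


def crop_box(width, height, top_frac=0.08, bottom_frac=0.06):
    """Return a (left, upper, right, lower) box that drops a header and footer band.

    The fractions are illustrative defaults, not measured values.
    """
    if top_frac < 0 or bottom_frac < 0 or top_frac + bottom_frac >= 1:
        raise ValueError("header and footer bands must leave some page behind")
    upper = round(height * top_frac)
    lower = height - round(height * bottom_frac)
    return (0, upper, width, lower)


def crop_folder(src_dir, dst_dir, top_frac=0.08, bottom_frac=0.06):
    """Crop every PNG in src_dir with the same fractions and save it to dst_dir."""
    # Imported here so crop_box stays usable without Pillow installed.
    from PIL import Image  # third-party: pip install Pillow

    out = Path(dst_dir)
    out.mkdir(parents=True, exist_ok=True)
    for path in sorted(Path(src_dir).glob("*.png")):
        with Image.open(path) as page:
            box = crop_box(page.width, page.height, top_frac, bottom_frac)
            page.crop(box).save(out / path.name)
```

Using fractions rather than pixel coordinates means the same settings still apply if you rescan at a different DPI. As with XnView, check a few pages by eye before running it over the whole folder.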

II. Optical Character Recognition (OCR) Software

I’ve tried quite a number of OCR programs. Ultimately, I settled on ABBYY FineReader, and hence it’s the one I recommend. It’s a bit pricey, but if you have a lot of PDFs and materials to OCR, I’d suggest you bite the bullet and get it. It’s simple to use and gives exceptional results. For example, I’ve used it to OCR a 1,000-page college textbook. The result was almost flawless, with only occasional mistakes, such as mistaking l for 1 and 2 for Z (which I think are forgivable). So yes, roughly 99.9% accuracy for English, in my experience. But I can’t vouch for other languages.
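Mistakes like l/1 and 2/Z tend to leave behind words that mix letters and digits, so they are easy to hunt down in the plain-text output. The Python sketch below is a heuristic of my own for illustration, not a FineReader feature: `suspicious_tokens` is a hypothetical helper that lists every token containing both a letter and a digit, along with its line number, so you can review them by hand.

```python
import re

# A whole token that contains at least one letter AND at least one digit,
# e.g. "fina1" (l read as 1) or "Z019" (2 read as Z).
MIXED_TOKEN = re.compile(r"\b(?=\w*[A-Za-z])(?=\w*\d)\w+\b")


def suspicious_tokens(text):
    """Return (line_number, token) pairs that deserve a manual look."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for match in MIXED_TOKEN.finditer(line):
            hits.append((line_no, match.group(0)))
    return hits


if __name__ == "__main__":
    ocr_output = "The fina1 exam\nwas held in Z019."
    for line_no, token in suspicious_tokens(ocr_output):
        print(f"line {line_no}: {token}")
```

Legitimate tokens such as “B12” get flagged too, which is why this only produces a review list and doesn’t try to fix anything automatically.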

Another worthy mention is OmniPage Standard. The OCR quality was pretty much identical to FineReader’s, but it crashed a lot when I tried it. I don’t know whether that’s still the case.

I won’t go into the details of how I use FineReader, as I think they’re not relevant to most people. Here are a few tips that I think apply across different software:

1. Exporting Formats

For export, I use Plain Text, so no formatting. I’ve experimented with various formatting options in FineReader. tl;dr: I don’t like them except plain text.

The PDF format is complicated: it’s basically images. The output is usually ugly: lines are chopped, and the formatting (text size in particular) gets even uglier once the text is imported into SuperMemo and then extracted or clozed.

2. Set the software to omit illustrations, images, tables etc

I set FineReader to omit pictures, formulae, figures, tables, etc. when converting. First, there is no point in OCRing them, as my export format is plain text. Second, the auto-processing would otherwise pick up the text inside tables and figures. That’s annoying because when you’re Incremental Reading in SM, sometimes the text doesn’t make sense, and when you check, you realize it came from a figure or table.

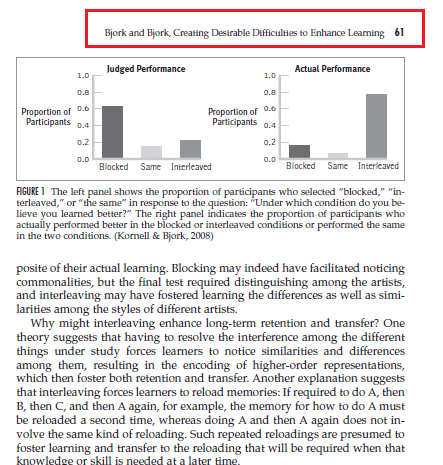

Third, every illustration has a caption. So, during Incremental Reading, when I see “Figure 10 The left panel shows…”, I know it’s an illustration. I then open the original PDF and read it there. Yes, I know it’s not the most elegant solution, but I rarely encounter illustrations, so it’s good enough for me.

I recommend you do the same: before feeding your PDF to an OCR program, make sure you’ve set it to omit illustrations, tables, and the like.

Post Processing: Using Regex to Remove In-text Citations

This step is optional. It’s mainly for reference books and research papers. In-text citations can be annoying because they take up a lot of space and interrupt your reading. I don’t read them. Depending on the citation style, these are the regexes I find useful and accurate for removing in-text citations:

\([^\)]+?\d{4}?[^\)]*?\)

Numeric citations only:

\((\d+(?:,\s*\d+)*)\)

I use VS Code to eliminate in-text citations. Notepad++ works just as well. Microsoft Word doesn’t support standard regex (it has its own wildcard search instead).

No matter how good the regex is, there are always exceptions. For example, some parentheses contain relevant information rather than citations. Therefore, I do a quick manual check before running the replacement.
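The same check-then-replace routine can be scripted. Below is a minimal Python sketch, assuming the two patterns above behave the same in Python’s `re` module as they do in VS Code; `preview_matches` and `strip_citations` are hypothetical helper names, and the tidy-up of leftover spaces is an extra step I added for illustration.

```python
import re

# Author-year style: parentheses that contain a 4-digit number, e.g. "(Smith, 2010)".
AUTHOR_YEAR = re.compile(r"\([^\)]+?\d{4}?[^\)]*?\)")
# Numeric style: "(3)" or "(3, 7, 12)".
NUMERIC = re.compile(r"\((\d+(?:,\s*\d+)*)\)")


def preview_matches(text, pattern):
    """List every span the pattern would delete, for the manual check."""
    return [m.group(0) for m in pattern.finditer(text)]


def strip_citations(text, pattern):
    """Delete all matches, then tidy the whitespace they leave behind."""
    cleaned = pattern.sub("", text)
    cleaned = re.sub(r"\s+([.,;:])", r"\1", cleaned)  # "word ." -> "word."
    return re.sub(r" {2,}", " ", cleaned)  # collapse doubled spaces


if __name__ == "__main__":
    sample = "Spacing improves retention (Cepeda et al., 2006). It also helps transfer (3, 7)."
    print(preview_matches(sample, AUTHOR_YEAR))
    print(strip_citations(strip_citations(sample, AUTHOR_YEAR), NUMERIC))
```

The preview step is where the exceptions show up: the author-year pattern also matches something like “(n = 1200)”, because any four digits inside parentheses look like a year to it.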

Closing Remarks

It’s a dream to have all your research papers and reference books digitized and imported into SuperMemo for Incremental Reading. Digitizing your learning materials takes time and practice. It took me a lot of trial and error to figure out the solutions above. I hope they are useful.